Written by on 07/14/2017

One of the first things I stumbled upon when I started my first Node.js project was how to handle the communication between the browser (the client) and my middleware. The middleware is a Node.js application that uses the CUBRID Node.js driver (node-cubrid) to exchange information with a CUBRID 8.4.1 database.

I was already familiar with AJAX (btw, thank God for jQuery!!) but, while studying Node.js, I found out about the Socket.IO module and even found some pretty nice code examples on the internet... examples that were very easy to (re)use...

So this quickly became a dilemma: which one to choose, AJAX or Socket.IO?

Obviously, as my experience was quite limited, I first needed more information... In other words, it was time for some quality Google searching :)

There’s a lot of information available and, obviously, one needs to filter out all the “noise” and keep what is really useful. Let me share with you some of the good links I found on the topic:

- http://stackoverflow.com/questions/7193033/nodejs-ajax-vs-socket-io-pros-and-cons

- http://podefr.tumblr.com/post/22553968711/an-innovative-way-to-replace-ajax-and-jsonp-using

- http://stackoverflow.com/questions/4848642/what-is-the-disadvantage-of-using-websocket-socket-io-where-ajax-will-do?rq=1

- http://howtonode.org/websockets-socketio

To summarize, here’s what I quickly found:

- Socket.IO (usually) keeps a persistent connection open between the client and the server (the middleware), so you can hit a maximum number of concurrent connections, depending on the resources available on the server side (whereas the same resources can serve more asynchronous AJAX requests).

- With AJAX you can make RESTful requests. This means that you can take advantage of existing HTTP infrastructure, e.g. proxies, to cache requests and to use conditional GET requests.

- There is more (communication) data overhead with AJAX than with Socket.IO (HTTP headers, cookies, etc.).

- AJAX is usually quicker to code than Socket.IO...

- When using Socket.IO, communication can be two-way: each side, client or server, can initiate a request. With AJAX, only the client can initiate a request!

- Socket.IO has more transport options, including Adobe Flash.
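The conditional-GET point can be made concrete with Node's built-in `crypto` module. In this hypothetical sketch (not code from the tests below), `conditionalGet` derives an ETag from the response body and answers `304 Not Modified` when the browser or a proxy sends the same value back in `If-None-Match`. Real servers also handle weak validators and lists of ETags, which this sketch deliberately ignores.

```javascript
const crypto = require('crypto');

// Hypothetical helper: decides between a full 200 response and a 304 Not Modified.
// requestHeaders uses lower-case names, as Node's req.headers does.
function conditionalGet(requestHeaders, body) {
  // Strong ETag derived from the body content
  const etag = '"' + crypto.createHash('sha1').update(body).digest('hex') + '"';

  // Simplification: exact match only (no weak "W/" validators or ETag lists)
  if (requestHeaders['if-none-match'] === etag) {
    return { status: 304, headers: { ETag: etag }, body: '' };
  }
  return { status: 200, headers: { ETag: etag, 'Content-Type': 'application/json' }, body: body };
}
```

Inside an `http.createServer` handler it could be used as `const r = conditionalGet(req.headers, body); res.writeHead(r.status, r.headers); res.end(r.body);`, so an unchanged resource costs only headers on the wire.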

Now, for my own application, what I was most interested in was the speed of making requests and getting data from the (Node.js) server!

Regarding the middleware’s data communication with the CUBRID database: as ~90% of my data access was read-only, a good data caching mechanism is obviously the way to go! But I’ll talk about that next time.

So I decided to put AJAX and Socket.IO to a speed test, to see which one is faster (at least in my hardware and software environment)! My middleware was set up to run on an i5 processor with 8 GB of RAM and an Intel X25 SSD drive.

But seriously, every speed test (and, generally speaking, any performance test) depends so much(!) on your hardware and software configuration that it is always a good idea to try things in your own environment, and to rely less on information you find on the internet and more on your own findings!

The tests I decided to run had to meet the following requirements:

- Test:
  - AJAX
  - Socket.IO persistent connection
  - Socket.IO non-persistent connections

- Test 10, 100, 250 and 500 data exchanges between the client and the server

- Each data exchange between the middleware SERVER (a Node.js web server) and the client (a browser) is a 4 KB random data string

- Run the server in release (not debug) mode

- Use Firefox as the client

- Minimize console output, on both server and client

- Run each test after a full page reload on the client

- Repeat each test at least 3 times, to make sure the results are consistent
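The requirements above, together with the 500 ms pause that the client scripts in this post wait between exchanges, can be sketched as a small transport-agnostic timing harness. This is not the author’s test code: `timeExchanges`, `runSuite` and the `exchangeOnce` callback are hypothetical names, and `exchangeOnce` is assumed to return a Promise that resolves once one 4 KB reply has arrived. Like the client scripts, it sums only the round-trip times and leaves the pauses out.

```javascript
// Hypothetical harness: exchangeOnce(idx) stands in for one AJAX or Socket.IO
// round trip and must return a Promise that resolves when the reply arrives.
function sleep(ms) {
  return new Promise((resolve) => setTimeout(resolve, ms));
}

// Runs `count` sequential exchanges and returns the summed round-trip time in ms.
// The pause between exchanges is not counted, mirroring the client scripts.
async function timeExchanges(exchangeOnce, count, pauseMs = 500) {
  let totalMs = 0;
  for (let idx = 1; idx <= count; idx++) {
    const start = Date.now();
    await exchangeOnce(idx);
    totalMs += Date.now() - start;
    if (idx < count) {
      await sleep(pauseMs);
    }
  }
  return totalMs;
}

// Repeats every exchange count `repeats` times (the post uses at least 3).
async function runSuite(exchangeOnce, counts = [10, 100, 250, 500], repeats = 3, pauseMs = 500) {
  const results = {};
  for (const count of counts) {
    results[count] = [];
    for (let run = 0; run < repeats; run++) {
      results[count].push(await timeExchanges(exchangeOnce, count, pauseMs));
    }
  }
  return results;
}
```

With the defaults, `runSuite(fn)` covers the 10/100/250/500 × 3 matrix; the full page reload between tests is outside what this sketch models.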

Testing Socket.IO, using a persistent connection

I created a small Node.js server to handle the client requests:

And this is the JS client script I used for the test:

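```javascript
// total_time, start_time, countMax and logMsg are page-level globals (not shown).
// How the first exchange is triggered is not part of this excerpt.
var socket = io.connect(document.location.href);

// Each reply carries the exchange index and the 4 KB data string
socket.on('you_have_data', function (idx, data) {
    var end_time = new Date();
    total_time += end_time - start_time;
    logMsg(total_time + '(ms.) [' + idx + '] - Received ' + data.length + ' bytes.');

    if (idx++ < countMax) {
        // Wait 500 ms before the next request; the pause is not added to total_time
        setTimeout(function () {
            start_time = new Date();
            socket.emit('send_me_data', idx);
        }, 500);
    }
});
```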

Testing Socket.IO, using NON-persistent connection

This time, I opened a new Socket.IO connection for each data exchange.

The Node.js server code was similar to the previous one, but since a new connection was initiated for every data exchange, I sent the data back to the client immediately after it connected:

```javascript
// On every new connection, immediately push 4 KB of random data to the client
io.sockets.on('connection', function (client) {
    client.emit('you_have_data', random_string(4096));
});
```
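The server code calls a `random_string()` helper that is not shown in the post. A minimal sketch of such a helper, assuming alphanumeric characters are acceptable: they need no escaping inside the JSONP string used in the AJAX test, and each one is a single byte in UTF-8, so 4096 characters make exactly 4 KB.

```javascript
// Hypothetical implementation of the random_string() helper used by the test servers.
// Only alphanumeric characters are used, so each character is one byte in UTF-8.
const CHARSET = 'ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789';

function random_string(length) {
  const chars = [];
  for (let i = 0; i < length; i++) {
    // Math.random() is in [0, 1), so the index always stays inside CHARSET
    chars.push(CHARSET[Math.floor(Math.random() * CHARSET.length)]);
  }
  return chars.join('');
}
```

`Math.random()` is not cryptographically secure, which is fine for throwaway test payloads like these.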

The client test code was:

```javascript
// total_time, countMax and logMsg are page-level globals (not shown)
function exchange(idx) {
    var start_time = new Date();
    // Force a brand-new connection instead of reusing an existing one
    var socket = io.connect(document.location.href, {'force new connection' : true});

    socket.on('you_have_data', function (data) {
        var end_time = new Date();
        total_time += end_time - start_time;
        // Tear the connection down again before the next exchange
        socket.removeAllListeners();
        socket.disconnect();
        logMsg(total_time + '(ms.) [' + idx + '] - Received ' + data.length + ' bytes.');

        if (idx++ < countMax) {
            setTimeout(function () {
                exchange(idx);
            }, 500);
        }
    });
}
```

Testing AJAX

Finally, I put AJAX to the test...

The Node.js server code was, again, not that different from the previous ones:

```javascript
// Inside the HTTP request handler: reply with a JSONP call wrapping 4 KB of random data
res.writeHead(200, {'Content-Type' : 'text/plain'});
res.end('_testcb(\'{"message": "' + random_string(4096) + '"}\')');
```

As for the client code, this is what I used to test:

```javascript
// total_time, countMax and logMsg are page-level globals (not shown)
function exchange(idx) {
    var start_time = new Date();
    // No url is given, so jQuery requests the current page URL
    $.ajax({
        dataType : "jsonp",
        jsonpCallback : "_testcb",  // must match the callback name the server wraps the data in
        timeout : 300,
        success : function (data) {
            var end_time = new Date();
            total_time += end_time - start_time;
            logMsg(total_time + '(ms.) [' + idx + '] - Received ' + data.length + ' bytes.');

            if (idx++ < countMax) {
                setTimeout(function () {
                    exchange(idx);
                }, 500);
            }
        },
        error : function (jqXHR, textStatus, errorThrown) {
            alert('Error: ' + textStatus + " " + errorThrown);
        }
    });
}
```

Remember: when using AJAX with Node.js, take into account that you might be making cross-domain requests that violate the same-origin policy; in that case, you should use the JSONP format!
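The AJAX server in this post builds its JSONP body by string concatenation and hard-codes the `_testcb` callback name, so the client’s success handler receives a JSON *string*. A slightly more defensive variant is sketched below; `jsonpBody` and `callbackFromUrl` are hypothetical helper names, and the sketch assumes jQuery’s default `callback` query parameter. Because it passes an object rather than a string, a client would read `data.message.length` instead of `data.length`.

```javascript
// Hypothetical helpers for answering a jQuery JSONP request from Node.js.

// Wraps `payload` in a call to `callbackName`. JSON.stringify escapes quotes
// and backslashes, so the payload cannot end the call early.
function jsonpBody(callbackName, payload) {
  // Only accept plain JavaScript identifiers such as "_testcb"
  if (!/^[A-Za-z_$][\w$]*$/.test(callbackName)) {
    throw new Error('Invalid JSONP callback name: ' + callbackName);
  }
  return callbackName + '(' + JSON.stringify(payload) + ');';
}

// Reads the callback name from the request URL (e.g. "/?callback=_testcb&_=1342"),
// falling back to a fixed name when the parameter is missing.
function callbackFromUrl(requestUrl, fallback = '_testcb') {
  const name = new URL(requestUrl, 'http://localhost').searchParams.get('callback');
  return name || fallback;
}
```

In a request handler this could be used as `res.end(jsonpBody(callbackFromUrl(req.url), { message: random_string(4096) }))`, preferably with a JavaScript `Content-Type` such as `application/javascript`.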

Btw, as you can see, I quoted only the most significant parts of the test code, to save space. If anyone needs the full code, server and client, please let me know – I’ll be happy to share them.

OK – it’s time now to see what we got after all this work!

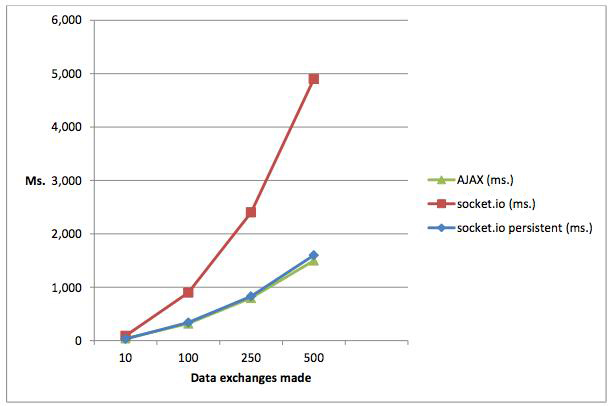

I ran each test for 10, 100, 250 and 500 data exchanges, and this is what I got in the end:

| Data exchanges | Socket.IO NON-persistent (ms) | AJAX (ms) | Socket.IO persistent (ms) |
|---|---|---|---|
| 10 | 90 | 40 | 32 |
| 100 | 900 | 320 | 340 |
| 250 | 2,400 | 800 | 830 |
| 500 | 4,900 | 1,500 | 1,600 |

Looking into the results, we can notice a few things right away:

- For each type of test, the results scale quite linearly; this is good, as it shows that the results are consistent.

- The results clearly show that Socket.IO non-persistent connections perform significantly worse than the other two approaches: roughly three times slower for 100 or more exchanges.

- There doesn’t seem to be a big difference between AJAX and Socket.IO persistent connections: the gap is less than a millisecond per exchange, and AJAX is slightly ahead for three of the four test sizes. This means that if you can live with, for example, fewer than 10,000 data exchanges per day, chances are high that the user won’t notice a speed difference...
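The linearity observation is easy to check by dividing each total by its number of exchanges. The snippet below does just that with the numbers from the results table; the object keys are hypothetical names for the table’s three columns.

```javascript
// Totals (ms) from the results table, keyed by number of data exchanges
const results = {
  10:  { socketIoNonPersistent: 90,   ajax: 40,   socketIoPersistent: 32 },
  100: { socketIoNonPersistent: 900,  ajax: 320,  socketIoPersistent: 340 },
  250: { socketIoNonPersistent: 2400, ajax: 800,  socketIoPersistent: 830 },
  500: { socketIoNonPersistent: 4900, ajax: 1500, socketIoPersistent: 1600 },
};

// Average cost of one data exchange: total time divided by number of exchanges
function perExchangeMs(table) {
  const out = {};
  for (const [count, row] of Object.entries(table)) {
    out[count] = {};
    for (const [method, totalMs] of Object.entries(row)) {
      out[count][method] = totalMs / Number(count);
    }
  }
  return out;
}

console.table(perExchangeMs(results));
```

For 500 exchanges this gives 9.8 ms per exchange for non-persistent Socket.IO, 3 ms for AJAX and 3.2 ms for persistent Socket.IO. The other rows fall in the same ranges (9 to 9.8, 3 to 4 and 3.2 to 3.4 ms respectively), which is what roughly linear scaling looks like.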

The graph below illustrates the numbers I obtained in the tests:

...So what’s next...?

...Well, I have to figure out what kind of traffic I need to support and then re-run the tests for those numbers, this time excluding Socket.IO non-persistent connections, because it is now clear that the choice is between AJAX and persistent Socket.IO connections.

And I also learned that the difference in speed will most probably not be as big as one would expect... at least not for a “small-traffic” web site, so I need to start looking into the other advantages and disadvantages of each approach/technology when choosing my solution!

P.S. Here are a few more nice resources to find interesting stuff about Node.js, Socket.IO and AJAX: